A few days ago I received an error report about a number of file download failures in one of the applications I was working on. After some troubleshooting, I found that the failures happened only on files of roughly 2 GB or larger. A colleague also dug up an important clue in one of the log files: failed to allocate memory, but there was no stack trace to be found. Great start.

After scanning the application code base, I narrowed the potential culprit down to the Ruby client generated by Swagger Codegen, a couple of layers underneath. It was time to reproduce the error locally and monitor resource consumption with the good ol’ dynamic duo of ps and gnuplot.
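For anyone who wants to reproduce this kind of measurement, here is a minimal sketch of the sampling side. It is not the script I used: the helper names (`parse_ps_line`, `sample_usage`, `monitor`) and the output layout are made up for illustration, and it assumes a `ps` that accepts `-o %cpu=,rss= -p PID`, as the Linux and macOS versions do.

```ruby
# Parses one line of `ps -o %cpu=,rss=` output into [cpu_percent, rss_kilobytes].
def parse_ps_line(line)
  cpu, rss = line.split
  [Float(cpu), Integer(rss)]
end

# Samples CPU and resident memory for `pid`.
# Returns nil when ps prints nothing, i.e. the process has exited.
def sample_usage(pid)
  out = IO.popen(["ps", "-o", "%cpu=,rss=", "-p", pid.to_s], &:read)
  out.strip.empty? ? nil : parse_ps_line(out)
end

# Writes "elapsed_seconds cpu_percent rss_kb" rows to `io` every `interval`
# seconds until the process goes away.
def monitor(pid, io, interval: 0.5)
  started = Process.clock_gettime(Process::CLOCK_MONOTONIC)
  loop do
    sample = sample_usage(pid)
    break if sample.nil?

    elapsed = Process.clock_gettime(Process::CLOCK_MONOTONIC) - started
    io.puts format("%.1f %.1f %d", elapsed, *sample)
    sleep interval
  end
end
```

Something like `File.open("usage.dat", "w") { |f| monitor(pid, f) }` would then produce a whitespace-separated file that gnuplot can chart, for example with `plot "usage.dat" using 1:3 with lines` for the memory column.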

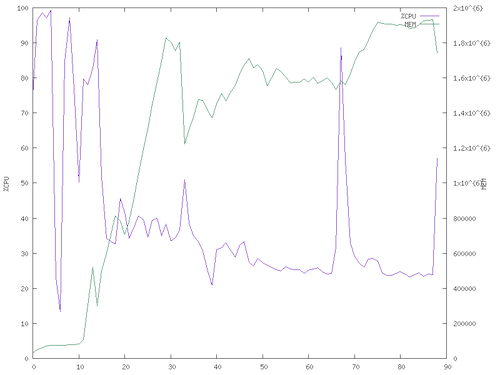

Result! The chart shows CPU and memory usage sampled every half a second during a 4 GB file download. The download broke with a failed to allocate memory error at the end, right after memory usage reached 2 GB (the peak of the green line before it drops).

Time to dive into the Swagger Codegen code base. I found this line in the Ruby api_client.mustache template, which confirmed my suspicion that the whole downloaded file was buffered in memory and stored in the response body.

```ruby
file.write(response.body)
```

I refactored the file download implementation to stream the response body instead, essentially writing the file in chunks. Here’s a small part of the change that illustrates the different approach: the on_body request callback, which encodes and writes the file one chunk at a time.

```ruby
request.on_body do |chunk|
  chunk.force_encoding(encoding)
  file.write(chunk)
end
```
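The same idea can be tried outside the generated client. The sketch below uses Ruby's standard Net::HTTP rather than the generated client's own HTTP library, so it is an analogue of the change and not the change itself. The `stream_download` name and its return value are made up for illustration.

```ruby
require "net/http"
require "uri"

# Streams the response body at `url` into `path` one chunk at a time,
# so memory use stays bounded by the chunk size instead of the file size.
# Returns the number of bytes written.
def stream_download(url, path)
  uri = URI(url)
  bytes_written = 0

  Net::HTTP.start(uri.host, uri.port, use_ssl: uri.scheme == "https") do |http|
    # The block form yields the response before its body has been read.
    http.request(Net::HTTP::Get.new(uri)) do |response|
      unless response.is_a?(Net::HTTPSuccess)
        raise "download failed: HTTP #{response.code}"
      end

      # Binary mode writes the bytes exactly as received.
      File.open(path, "wb") do |file|
        response.read_body do |chunk|
          bytes_written += file.write(chunk)
        end
      end
    end
  end

  bytes_written
end
```

The important detail is passing a block to `read_body`: without it, Net::HTTP collects the whole body into a single string, which is the same buffering behaviour that caused the original failure.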

Time to return to ps and gnuplot.

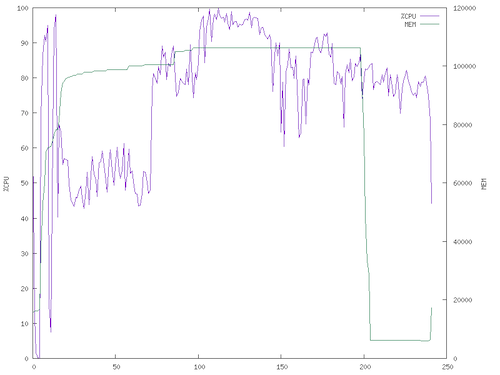

Success! The 4 GB file downloaded successfully, and memory usage (the green line on the chart above) stayed slightly above 100 MB. There’s an increase in CPU usage from encoding the chunks, which is required since a chunk’s encoding can’t be guaranteed to match the downloaded file’s intended encoding. It’s a worthwhile tradeoff, because the Ruby client can now handle large files. Streaming FTW!
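To make the per-chunk encoding step a bit more concrete, here is a tiny illustration of what `force_encoding` does to a single chunk: it changes the encoding label on the string and leaves the bytes alone. The `relabel_chunk` helper and the sample bytes are made up for this example.

```ruby
# Hypothetical helper mirroring the callback body: relabel a chunk in place.
def relabel_chunk(chunk, encoding)
  chunk.force_encoding(encoding)
end

raw = "caf\xC3\xA9".b              # bytes tagged as binary, as they might arrive
bytes_before = raw.bytes
relabel_chunk(raw, Encoding::UTF_8)

puts raw.encoding == Encoding::UTF_8   # true: the label changed
puts raw.bytes == bytes_before         # true: the bytes did not
```

One thing to keep in mind with this approach is that a chunk boundary can fall in the middle of a multi-byte character, so an individual chunk is not guaranteed to be a valid string on its own even though the bytes written to the file are complete.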

You can follow this topic on GitHub issue #5704.